I need to develop an app that recognizes a specific colored area of an image and returns the width of that area in pixels. When an image is uploaded to the app, it should identify a colored square (a red square, for example) and return its width. Is this possible with MIT App Inventor?

Theoretically, it can be done. In practice, it doesn't really work.

Look at this topic:

Dear @Patryk_F,

Thank you very much for your reply and your opinion. What you say is correct. My actual requirement is to measure the growth of a tree over a period of time. I planned to photograph the tree with the camera always placed at the same distance from it, and to stick a strip of a specific color around the tree trunk. The app would recognize that strip by its color. However, I also think it's not practical. Do you have any ideas on how to achieve this?

I think the canvas method would be too time consuming and slow for large photos.

What is this red stripe for? Do you want to estimate the height of the tree somehow?

See also this:

https://appinventor.mit.edu/explore/resources/ai/personal-image-classifier

The app recognizes the red strip and calculates its width. The width of the red strip is the width of the tree trunk, so we can calculate the diameter of the tree. The trees are not very big; the diameter is usually around 30 cm.

I went through the Personal Image Classifier, but was unable to find a solution for this.

Tape measure ? - seriously...

So the Canvas method would be helpful. The only thing you would have to do is not process the entire photo, but a specific area, if the red bar were always at the same height. It would make the task easier.

You would have to set the photo as the Canvas background. The red stripe must be approximately half the width of the image. You need a procedure that checks the color vertically, pixel by pixel. When it hits red, it records the coordinate and keeps checking; when the red runs out, it records that coordinate too. Then calculate the mean of these two coordinates to find the vertical center of the bar. Finally, from the center of the bar, check pixel by pixel to the right and to the left to find how wide the bar is.
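The procedure described above can be sketched outside App Inventor to check the logic before building it in blocks. This is only a rough Python illustration: the image is modeled as a list of rows of `(r, g, b)` tuples, the names `is_red` and `stripe_width` and the threshold values are made up for the example, and it assumes the stripe crosses the vertical center line of the image.

```python
# Hypothetical sketch: an image is a list of rows, each row a list of (r, g, b) tuples.

def is_red(pixel, min_red=150, max_other=100):
    """Crude red test with assumed thresholds; tune them on real photos."""
    r, g, b = pixel
    return r >= min_red and g <= max_other and b <= max_other

def stripe_width(image, is_target=is_red):
    """Return the stripe width in pixels, or None if no stripe is found."""
    height = len(image)
    width = len(image[0])
    x_mid = width // 2

    # Step 1: scan the center column top to bottom and record
    # where the red starts and where it runs out.
    top = bottom = None
    for y in range(height):
        if is_target(image[y][x_mid]):
            if top is None:
                top = y
            bottom = y
        elif top is not None:
            break  # red has run out
    if top is None:
        return None

    # Step 2: the mean of the two coordinates is the vertical center of the bar.
    y_mid = (top + bottom) // 2

    # Step 3: from the center of the bar, walk left and right while still red.
    left = x_mid
    while left > 0 and is_target(image[y_mid][left - 1]):
        left -= 1
    right = x_mid
    while right < width - 1 and is_target(image[y_mid][right + 1]):
        right += 1
    return right - left + 1
```

Only one column and one row are read, so the cost grows with the image height plus its width rather than with the total number of pixels.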

@TIMAI2,

A tape measure is difficult to use when there are hundreds of trees. A tape measure also means more paperwork (writing down the details). Each tree is recorded with its GPS location.

The red bar is always at the same height. But its RGB values can change from left to right depending on the direction of the light in the photo, so the color may vary along the strip. That might cause problems.
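One common way to soften this lighting problem is to test the hue of a pixel instead of its raw RGB values, since shading mostly changes brightness. The following is a minimal Python sketch of that idea, not something from App Inventor itself; the function name `is_reddish` and all tolerance values are assumptions that would need tuning on real photos.

```python
import colorsys

def is_reddish(pixel, max_hue_dist=0.05, min_sat=0.4, min_val=0.2):
    """Hue-based red test; all thresholds are assumed values for illustration."""
    r, g, b = (c / 255.0 for c in pixel)
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    # Red sits at hue 0, which wraps around to 1, so measure distance both ways.
    hue_dist = min(h, 1.0 - h)
    return hue_dist <= max_hue_dist and s >= min_sat and v >= min_val

# A brightly lit red and a shaded red differ a lot in RGB but share a hue.
print(is_reddish((230, 30, 30)))   # sunlit side of the strip
print(is_reddish((110, 20, 25)))   # shaded side of the strip
print(is_reddish((120, 90, 60)))   # brownish bark
```

The saturation and value floors keep gray, white, and very dark pixels from counting as red, because their hue is not meaningful.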

I think that taking a photo with a red stripe at an equal distance will take longer than measuring the circumference of the tree manually and entering it manually into the application.

Maybe just a database, e.g. a Google Sheet, to which the application would send the tree location from the location sensor and its diameter, entered manually.

No, there are some more tasks to be done. Each tree is labelled with a QR code that contains its GPS location, tree category, tree name, etc. The app reads the QR code and saves the circumference automatically.

Most importantly, we need to prevent manual data entry. When the task is assigned to someone, he may not measure the circumference at all. Instead, he writes down some value without even going to the location of the tree. If everything is automated, they have to go to the location and take a photo, so the actual circumference is recorded.

Thanks @Patryk_F again for your great help.

Special Effects actors wear green suits with ping pong balls attached at critical locations to aid in their motion capture.

Though I wouldn't recommend dressing up your trees in green suits, maybe something could be done with ping pong ball necklaces?

So post an example photo of such a tree so that someone can test some method.

No photos taken so far. Just planning how we can go ahead with this.

You would have to do some tests. Pure theory is not enough.

If you have a stripe idea, you would need to take a picture like this with whatever tree and target stripe you want. Any tree, not necessarily the target tree.

The photo couldn't be too big either. I think the maximum size is HD.

Yes, I will get a sample photo and try the Canvas method as you said.

You want to measure the width of a tree trunk by taking a photo with the Android camera. Maybe.

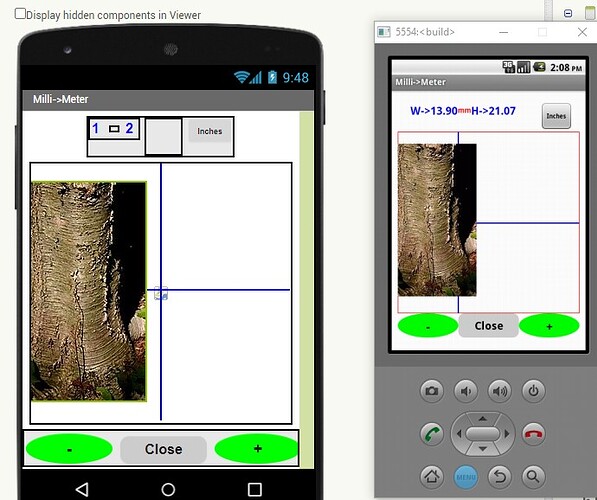

This example in the gallery might give you some alternative ideas: MIT App Inventor Gallery. As published, it needs work to provide usable code for part of your project.

Here is a tree trunk. Photograph your tree with a ruler in front of the trunk so there is a scale reference.

The picture you take goes to a sprite displayed in the Canvas. Use the cross hairs to 'measure' and create some math to estimate the width of the trunk based on the length of the ruler that you include in the photo.
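The "some math" here is a simple proportion: the ruler's known length divided by its length in pixels gives a scale in cm per pixel, which then converts the trunk's pixel width. A small Python sketch follows, with hypothetical function names and made-up numbers. It assumes the ruler is at practically the same distance from the camera as the trunk, and the circumference step additionally assumes a roughly circular trunk.

```python
import math

def trunk_width_cm(trunk_px, ruler_px, ruler_cm):
    """Convert a trunk width in pixels to cm using a ruler visible in the same photo."""
    if ruler_px <= 0:
        raise ValueError("ruler_px must be positive")
    cm_per_pixel = ruler_cm / ruler_px
    return trunk_px * cm_per_pixel

def circumference_cm(diameter_cm):
    """Circumference of a circular trunk: pi times the diameter."""
    return math.pi * diameter_cm

# Made-up example: a 30 cm ruler spans 600 px and the trunk spans 580 px.
width = trunk_width_cm(trunk_px=580, ruler_px=600, ruler_cm=30.0)
print(round(width, 1))                    # 29.0
print(round(circumference_cm(width), 1))  # 91.1
```

Because the scale is taken from the same photo, the camera distance does not need to be fixed in advance, as long as the ruler and trunk are measured in the same image.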

I think this is hopeless. The scale of the image you get of the tree trunk in the sprite depends on the distance the photo is taken from the trunk. So you have to 'scale the photo', use the cross hairs to measure etc. Fool-y automated perhaps. Wish you luck. If possible, this is seriously complicated.

A simple method would be to use large calipers (possibly made of wood) that display the measured trunk width in the photograph. The scale could be color coded and, depending on the color-coded width, you could use the facility to check a pixel color to determine the width measured in the image and use it to capture the measurement (maybe).

What if I take a picture focused on the tree trunk, so that the background is blurred and the trunk is sharp? If there is a way to detect the sharp edges in a picture, then we can find the width of the tree trunk. Can somebody help me detect the sharp pixels of a picture?

Unfortunately for you, that is impossible without a fancy image manipulation app.

A pixel is a pixel; it is always 'sharp'. See Pixel - Wikipedia. Although a group of pixels can display what appears to be a blurred image, the pixels themselves are always the same shape.
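Put differently, sharpness shows up only in how abruptly brightness changes between neighboring pixels. As a rough illustration of that idea (in Python, not App Inventor), the sketch below looks at one row of grayscale values and marks the places where the jump to the next pixel is large. The function names, the threshold, and the sample row are all made up; real photos are noisy and bark texture produces many extra edges, so this is far from a working trunk detector.

```python
def strong_edges(row, threshold=40):
    """Indices i where brightness jumps sharply between row[i] and row[i + 1]."""
    return [i for i in range(len(row) - 1)
            if abs(row[i + 1] - row[i]) >= threshold]

def width_between_outer_edges(row, threshold=40):
    """Pixel count between the first and last strong edge, or None if fewer than two."""
    edges = strong_edges(row, threshold)
    if len(edges) < 2:
        return None
    return edges[-1] - edges[0]

# Made-up grayscale row: blurred background changes slowly,
# a dark trunk (5 pixels wide) starts and ends with an abrupt jump.
row = [100, 105, 110, 115, 40, 42, 41, 43, 40, 120, 125, 130]
print(strong_edges(row))               # [3, 8]
print(width_between_outer_edges(row))  # 5
```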

@SteveJG,

Thank you for your reply. Yes, what you say is correct. Do you know how an app detects a face? Can we use face detection in this app? I mean the logic