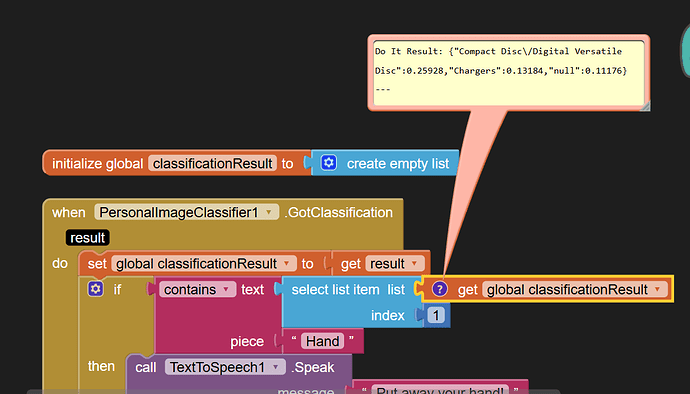

I added a global variable to capture the classification result so I could display its value.

See the {} brackets?

That's a dictionary, not a list, so you can't use list blocks on it.
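
Blocks don't paste well as text, so here is the same distinction as a rough Python sketch. The labels and confidence values are made up for illustration; they are not taken from your model. It shows why positional (list-style) access doesn't apply to a dictionary, and one way to turn the result into a list of pairs if you do want to work with it as a list.

```python
# Hypothetical classification result, shaped like the {} value in the screenshot.
# The labels and numbers are invented for this example.
result = {"keyboard": 0.82, "mouse": 0.18}

# A dictionary is read by key, not by position.
keyboard_confidence = result["keyboard"]

# Converting to a list of [label, confidence] pairs allows list-style operations.
pairs = [[label, confidence] for label, confidence in result.items()]

# Sort so the most confident label comes first, then pick it.
pairs.sort(key=lambda pair: pair[1], reverse=True)
best_label = pairs[0][0]

print(best_label)
```

In App Inventor the equivalent would be to use the blocks in the Dictionaries drawer (look up a value by key, or convert the dictionary to a list of pairs) rather than the list blocks.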

There's also the matter of your expectations from your model.

It has only two non-null categories, both electronic hardware, and neither mentioning hands.

I went to double-check the tooltip for the classifier event block:

This is baffling. It says it returns a list of lists, so either my eyes are going, or my mismatched Companion version on my phone is to blame.

I need to sync versions and retest.

Or you could try this on your phone, with the Companion.